Question Mining for AI Search in 2026

You can rank for thousands of keywords and still lose deals to the same three unanswered questions. Question mining is how you fix that.

By Ronan Leonard, Founder, Intelligent Resourcing

Question mining for AI search is the process of collecting real buyer questions from sales calls, support tickets, reviews, Reddit, on-site search, and chat, then clustering them by intent and turning them into canonical answers. Done well, it gives your business reusable answers that work across organic search, AI search, service pages, FAQs, sales enablement, and customer education.

Mine buyer questions to uncover real objections, comparisons, and decision friction.

Group questions by intent to organise messy inputs into usable themes.

Create one canonical answer to give each key question a clear, consistent response.

Reuse answers across channels to support SEO, AI search, service pages, FAQs, sales, and customer education.

Systemise the process to reduce duplication, improve clarity, and scale answer reuse.

| Criteria | Traditional keyword research | Question mining |
| --- | --- | --- |
| Primary input | Search terms and topic variants | Real buyer questions and objections |
| Main goal | Rank pages for topics | Build reusable answers for decisions |
| Best signal captured | Demand language | Intent, friction, doubt, comparison |
| AI search fit | Moderate | Strong |
| Best use case | Broad topic coverage | Commercial, answer-led content |
| Main risk | Generic copy | Messy process without ownership |

If your goal is broad topic coverage, keyword research alone may be enough. If your goal is to create content that reflects real buyer thinking, supports self-service research and gives AI systems cleaner answers to reuse, question mining is the stronger approach.

“Question mining is the discipline of systematically identifying the questions that determine whether someone chooses, hesitates, or walks away.” Josh Grant

What is question mining for AI search?

Question mining for AI search is the process of identifying the exact questions buyers ask and turning them into clear, structured answers that AI systems can easily extract and surface.

Instead of asking which keywords to target, question mining starts with a different prompt: what exact questions are slowing a buyer down? That shift changes how content is planned and written, and it is a core part of The GEO Engine, which helps brands turn buyer questions into structured answers that can be surfaced and cited in AI search.

Keywords describe topics. Questions describe decisions.

This distinction matters in AI search because these systems increasingly favour content that is easy to interpret, summarise and cite. A page that broadly discusses a topic can be less useful than a section that directly answers a specific question. Relevance depends on precision and extractability.

Question mining is not just an SEO tactic. It is an answer system discipline.

In practice, question mining means taking messy buyer language from sales, support and search and converting it into a stable set of canonical questions and answers. These answers are written to be clear, direct and reusable across contexts.

The result is an answer library: a structured collection of decision-focused responses that can be reused across pages, help content and AI-visible formats where buyers learn, compare and validate.

What’s changed?

The buying journey now starts in the answer layer, not with sales.

The answer layer now carries more weight because buyers are doing more research before they ever speak to sales. 87% of buyers now demand a self-service path, using AI to summarise vendor capabilities and compare pricing before they will agree to a demo. That means content now has to explain, reassure and compare earlier in the journey.

Sales influence now happens later in the process because buyers are forming stronger opinions before vendor engagement. That increases the value of discoverable, trusted, answer-led content that can shape perception before a rep gets involved.

B2B research is also spread across more touchpoints. Buyers now use 10 or more channels during the purchase journey, which raises the need for platform-specific content across AI search, peer review sites, LinkedIn and your own site.

These shifts change the job of content. It is no longer there only to support the sales conversation after interest exists. It now has to earn consideration before sales enters, by answering the questions buyers are already asking across the platforms they already trust.

Why keyword research alone is no longer enough

Keyword research is still useful, but it is no longer enough on its own. It helps you understand category language, search demand, and topic coverage. It is still part of the stack.

The problem is that keyword research usually tells you what market the buyer is in, not what decision they are trying to make.

Keyword data gives you the topic. Buyer questions give you tension.

A keyword like “AI search optimisation” tells you the subject area. A buyer question like “How do we make our service pages reusable in AI answers without creating duplicate content?” tells you something far more commercially useful: the implementation concern, the risk, the desired outcome and the likely next action. That is closer to revenue because it reflects the real decision friction behind the search.

Where the best buyer questions actually come from

The best buyer questions usually already exist inside your business.

Most teams do not need another tool before they start question mining. They need a better way to collect, sort and reuse the questions buyers already ask across different channels.

Sales calls: where commercial hesitation shows up first

Sales calls are often the strongest source because this is where real objections appear in plain language. Buyers ask about fit, timing, risk, cost and alternatives when they are close enough to care.

Support tickets: where implementation friction becomes visible

Support tickets matter because they show what happens after interest turns into use. They reveal where expectations break, where confusion starts and what practical issues buyers need explained more clearly.

Reviews and testimonials: where trust signals become public

Reviews and testimonials show which questions mattered enough for customers to mention publicly. They often highlight the proof points, concerns and decision factors that shaped confidence.

Reddit, forums and communities: where language gets more honest

Reddit, forums and online communities are useful because people tend to speak more candidly there than they do on brand-owned channels. This is where you often find raw wording, unfiltered frustrations and sharper comparisons.

On-site search and chatbot logs: where expectation gaps appear

On-site search and chatbot logs show the language people use when they expect your site to help them immediately. These sources are useful because they expose what visitors expected to find but could not find quickly.

What each source gives you

Sales calls: commercial hesitation

Support tickets: implementation friction

Reviews and testimonials: trust signals

Reddit, forums and communities: unfiltered language

On-site search and chatbot logs: expectation gaps

Each source tells you something different, but together they give you a much sharper picture of what your market actually needs explained. That is what makes question mining useful: it replaces guesswork with evidence from the places buyers already reveal intent and doubt.

Question mining vs keyword research vs intent data

These methods are not interchangeable.

Keyword research tells you how the market labels a topic. Intent data tells you which accounts may be moving. Question mining tells you what needs to be explained for movement to happen.

That is why the strongest strategy usually combines all three. Use keyword research to understand category demand. Use intent signals to prioritise which segments or accounts matter now. Use question mining to decide what answers need to exist across your site and sales process.

If keyword research names the shelf, and intent data tells you who is walking towards it, question mining tells you what they need to hear before they pick a product up.

The question mining workflow

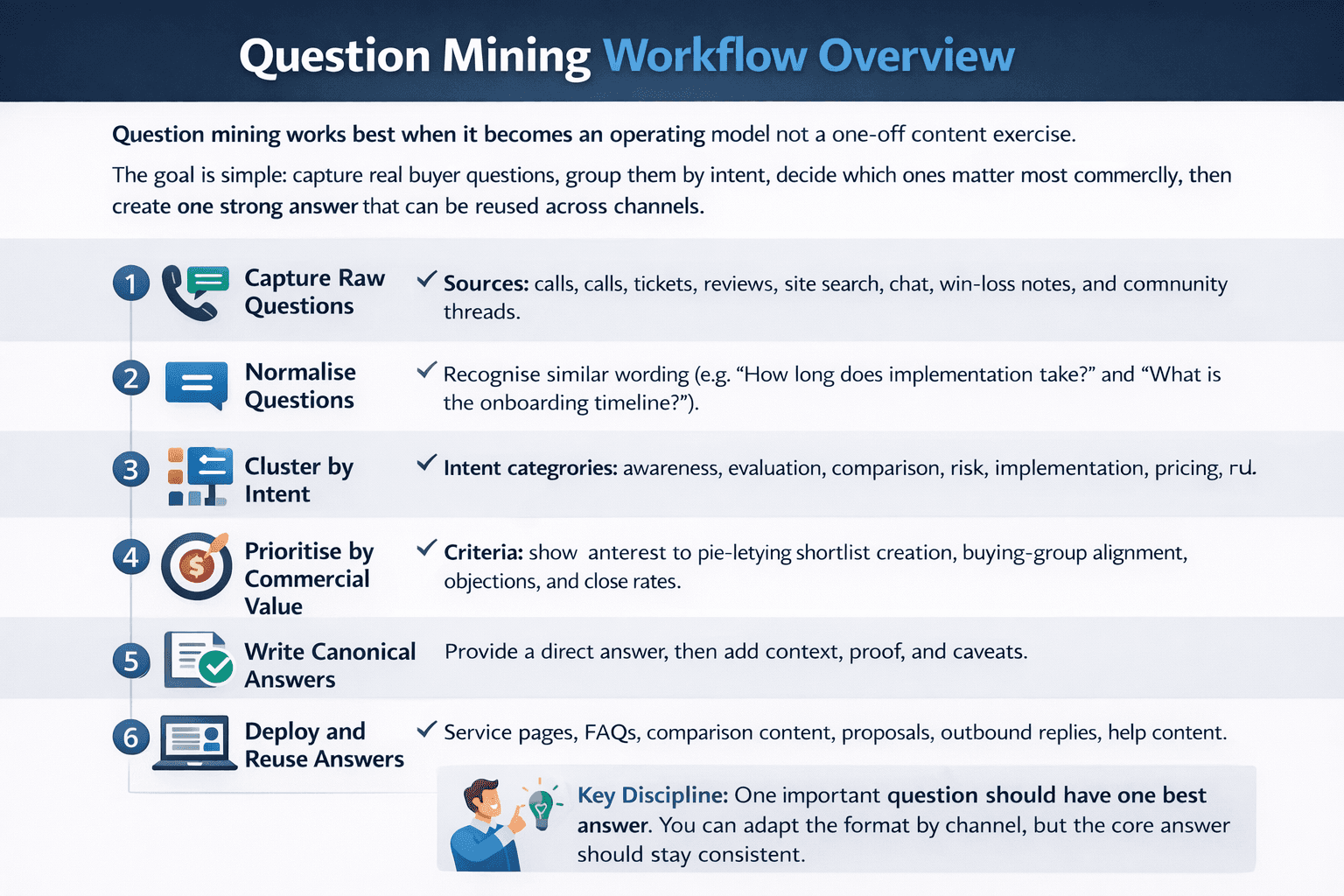

Question mining works best when it becomes an operating model, not a one-off content exercise.

The goal is simple: capture real buyer questions, group them by intent, decide which ones matter most commercially, then create one strong answer that can be reused across channels.

Capture raw questions from calls, tickets, reviews, site search, chat, win-loss notes, and community threads.

Normalise similar wording so “How long does implementation take?” and “What is the onboarding timeline?” are recognised as the same intent.

Cluster by intent such as awareness, evaluation, comparison, risk, implementation, pricing, and fit.

Prioritise by commercial value by asking which questions influence shortlist creation, buying-group alignment, objections, and close rates.

Write one canonical answer for each priority question in plain language, with a direct answer first, then context, proof, and caveats.

Deploy and reuse the answer across service pages, FAQs, comparison content, proposals, outbound replies, and help content.

The key discipline is this: one important question should have one best answer. You can adapt the format by channel, but the core answer should stay consistent.
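The normalise, cluster and prioritise steps can be sketched in a few lines of Python. This is a minimal illustration, not a production method: the `INTENT_CUES` keyword lists, the intent names and the `weights` passed to `prioritise` are all hypothetical placeholders that a team would replace with cues and commercial weightings drawn from its own calls and tickets.

```python
import re
from collections import defaultdict

# Hypothetical keyword cues per intent; replace with cues from your own data.
INTENT_CUES = {
    "implementation": ["implementation", "onboarding", "migration", "set up"],
    "pricing": ["cost", "price", "pricing", "budget"],
    "comparison": ["versus", "vs", "compare", "alternative", "different from"],
    "risk": ["fail", "risk", "go wrong"],
}


def normalise(question: str) -> str:
    """Lowercase, replace punctuation with spaces and collapse whitespace."""
    text = re.sub(r"[^a-z0-9\s]", " ", question.lower())
    return re.sub(r"\s+", " ", text).strip()


def classify_intent(question: str) -> str:
    """Return the first intent whose cue appears as a whole word or phrase."""
    padded = f" {normalise(question)} "
    for intent, cues in INTENT_CUES.items():
        if any(f" {cue} " in padded for cue in cues):
            return intent
    return "unclassified"


def cluster_by_intent(questions):
    """Group raw questions under the intent they were classified into."""
    clusters = defaultdict(list)
    for question in questions:
        clusters[classify_intent(question)].append(question)
    return dict(clusters)


def prioritise(clusters, weights):
    """Rank intents by question count times an assumed commercial weight."""
    scores = {
        intent: len(items) * weights.get(intent, 1.0)
        for intent, items in clusters.items()
    }
    return sorted(scores, key=scores.get, reverse=True)


raw_questions = [
    "How long does implementation take?",
    "What is the onboarding timeline?",
    "How much does it cost?",
    "Why would this fail?",
]
clusters = cluster_by_intent(raw_questions)
ranking = prioritise(clusters, weights={"pricing": 3.0})
```

Keyword matching is deliberately crude here. It is enough to show that differently worded questions can land in the same cluster, but a real pipeline would likely need human review or a stronger similarity method before any answer is written.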

How to turn mined questions into canonical answers

A canonical answer is not a long essay. It is the clearest version of the truth you can publish.

Start with a direct answer in plain language. Then expand with the explanation, proof, trade-offs, and examples a buyer needs to feel confident. That same answer can often be reused in a page intro, an FAQ block, a comparison page, a proposal section, or a support article.
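One way to keep a single core answer while adapting the format by channel is to store the answer as structured data and render it in different shapes. The sketch below assumes a hypothetical `CanonicalAnswer` record; the field names, the two render methods and the placeholder text are illustrative only.

```python
from dataclasses import dataclass, field


@dataclass
class CanonicalAnswer:
    """One question, one best answer, stored once and rendered per channel."""
    question: str
    direct_answer: str
    context: str = ""
    proof: list[str] = field(default_factory=list)
    caveats: list[str] = field(default_factory=list)

    def as_faq(self) -> str:
        """Short form for an FAQ block: the question and the direct answer."""
        return f"{self.question}\n{self.direct_answer}"

    def as_page_section(self) -> str:
        """Long form: direct answer first, then context, proof and caveats."""
        parts = [self.question, self.direct_answer]
        if self.context:
            parts.append(self.context)
        parts.extend(f"Proof: {item}" for item in self.proof)
        parts.extend(f"Caveat: {item}" for item in self.caveats)
        return "\n\n".join(parts)


# Placeholder text only; real answers come from your own subject experts.
answer = CanonicalAnswer(
    question="How long does implementation take?",
    direct_answer="Placeholder direct answer.",
    context="Placeholder context.",
    caveats=["Placeholder caveat."],
)
faq_text = answer.as_faq()
page_text = answer.as_page_section()
```

Because both renderings read from the same `direct_answer` field, the FAQ version and the page version cannot drift apart unless someone edits the record itself.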

A useful rule is to give a mined question its own page when it has high commercial intent, meaningful search demand, or enough complexity to require proof and nuance. Keep it inside an FAQ block when it mainly supports a larger page and does not need its own journey.
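That rule of thumb can be written down as an explicit check so that different editors apply it the same way. The sketch below is an assumption-laden example: the `MinedQuestion` fields and the `SEARCH_DEMAND_THRESHOLD` value are hypothetical, and what counts as "meaningful" search demand will differ by market.

```python
from dataclasses import dataclass

# Hypothetical cut-off for "meaningful" monthly search demand.
SEARCH_DEMAND_THRESHOLD = 100


@dataclass
class MinedQuestion:
    text: str
    high_commercial_intent: bool
    monthly_searches: int
    needs_proof_and_nuance: bool


def placement(question: MinedQuestion,
              threshold: int = SEARCH_DEMAND_THRESHOLD) -> str:
    """Return 'own page' if any one of the three criteria is met, else 'faq block'."""
    if (
        question.high_commercial_intent
        or question.monthly_searches >= threshold
        or question.needs_proof_and_nuance
    ):
        return "own page"
    return "faq block"


complex_question = MinedQuestion(
    text="What does migration really involve?",
    high_commercial_intent=False,
    monthly_searches=20,
    needs_proof_and_nuance=True,
)
simple_question = MinedQuestion(
    text="Do you offer weekend support?",
    high_commercial_intent=False,
    monthly_searches=5,
    needs_proof_and_nuance=False,
)
```

Here `placement(complex_question)` returns "own page" because the question needs proof and nuance, while `placement(simple_question)` returns "faq block".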

Where to deploy question-mined answers

Question mining delivers the most value when answers are reused consistently across the customer journey, not when questions are simply collected.

On service pages, mined answers help you explain fit, process, timelines, risks, and expected outcomes. On comparison pages, they help you handle alternatives, migration questions, pricing logic, and category trade-offs. In FAQ blocks, they capture recurring objections without forcing every subtopic into its own article.

Common mistakes in question mining for AI search

The most critical mistake in question mining is treating every query as a standalone blog post, which creates content silos and inconsistent messaging across departments.

Content Duplication

Treating every question like a fresh blog idea. That usually creates duplication, weak pages, and inconsistent explanations.

Messaging Inconsistency

Writing different answers to the same question across different teams. Marketing says one thing, sales says another, and support explains a third version. That inconsistency weakens trust.
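A simple audit can surface this problem before buyers notice it. The sketch below assumes you can export each team's current answers as `(team, question, answer)` tuples, which is a hypothetical format. It flags any question where the stored answers are not identical. Exact string comparison is a blunt test, so treat the output as a list of candidates for human review rather than a verdict.

```python
from collections import defaultdict


def find_inconsistent_answers(records):
    """Return {question: {team: answer}} for questions with differing answers.

    `records` is an iterable of (team, question, answer) tuples. Questions are
    matched after lowercasing and collapsing whitespace, so this only catches
    near-identical wording of the question itself.
    """
    by_question = defaultdict(dict)
    for team, question, answer in records:
        key = " ".join(question.lower().split())
        by_question[key][team] = answer.strip()
    return {
        question: answers
        for question, answers in by_question.items()
        if len(set(answers.values())) > 1
    }


# Placeholder records for illustration only.
records = [
    ("marketing", "How long does onboarding take?", "Answer version A"),
    ("sales", "How long does onboarding take? ", "Answer version B"),
    ("support", "how long does onboarding take?", "Answer version A"),
    ("marketing", "Who should not buy this?", "Answer version C"),
    ("sales", "Who should not buy this?", "Answer version C"),
]
conflicts = find_inconsistent_answers(records)
```

With these placeholder records, only the onboarding question is flagged, because sales holds a different version from marketing and support.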

Avoiding Hard Questions

The most valuable questions are often uncomfortable: “Why would this fail?”, “What does migration really involve?”, “Who should not buy this?”, or “How is this different from hiring internally?” Those are the questions that influence real decisions.

Build question mining in-house or get expert support?

Build it in-house when you already have access to source data, clear owners across sales and support, and a team that can maintain the answer library over time.

Bring in outside support when the raw material is fragmented, teams disagree on positioning, or you need to turn scattered buyer language into a structured publishing system quickly.

A hybrid model is often the most practical. Internal teams contribute the real questions. A specialist partner turns them into canonical answers, page structures, internal taxonomies, and reuse rules.

That is where Intelligent Resourcing fits. The real opportunity is not producing more content. It is turning disorganised buyer language into an answer system that improves discoverability, trust, and conversion across channels.

Why question mining gives Intelligent Resourcing clients an edge

Question mining is especially valuable for businesses that sell something nuanced, operational, or hard to explain in a single headline.

Instead of publishing generic top-of-funnel content, Intelligent Resourcing can help businesses identify the questions that actually shape shortlist decisions, stakeholder alignment, and implementation confidence. That produces content which is more commercially useful, more reusable across teams, and more aligned with how AI search surfaces answers.

In other words, the advantage is not volume. It is clarity with structure.

FAQs

What is question mining for AI search?

It is the practice of collecting real buyer questions from customer-facing channels, clustering them by intent, and turning them into canonical answers that can be reused across search, AI answers, service pages, FAQs, and sales content.

How is question mining different from keyword research?

Keyword research tells you what topic people search around. Question mining tells you what they need explained before they trust, shortlist, or buy. The two work best together, but they solve different problems.

Where should you collect buyer questions from?

Start with sales calls, support tickets, reviews, Reddit and community discussions, on-site search, chatbot logs, and win-loss notes. Those sources usually contain more commercially useful language than keyword tools alone.

What makes a good canonical answer?

A good canonical answer is direct, specific, easy to reuse, and honest about trade-offs. It answers the core question first, then adds the context and proof needed for confidence.

Can question mining improve both SEO and AI search visibility?

Yes. It improves SEO because pages become more aligned with real buyer language and intent. It improves AI search visibility because answers become cleaner, more extractable, and easier to reuse in answer-generation contexts.

How often should you refresh question clusters?

Refresh them whenever buyer language shifts, your offer changes, or new objections appear in calls and support. For active categories, a quarterly review is usually sensible.