AI Visibility vs Rankings: How to Measure Where Your Brand Actually Appears

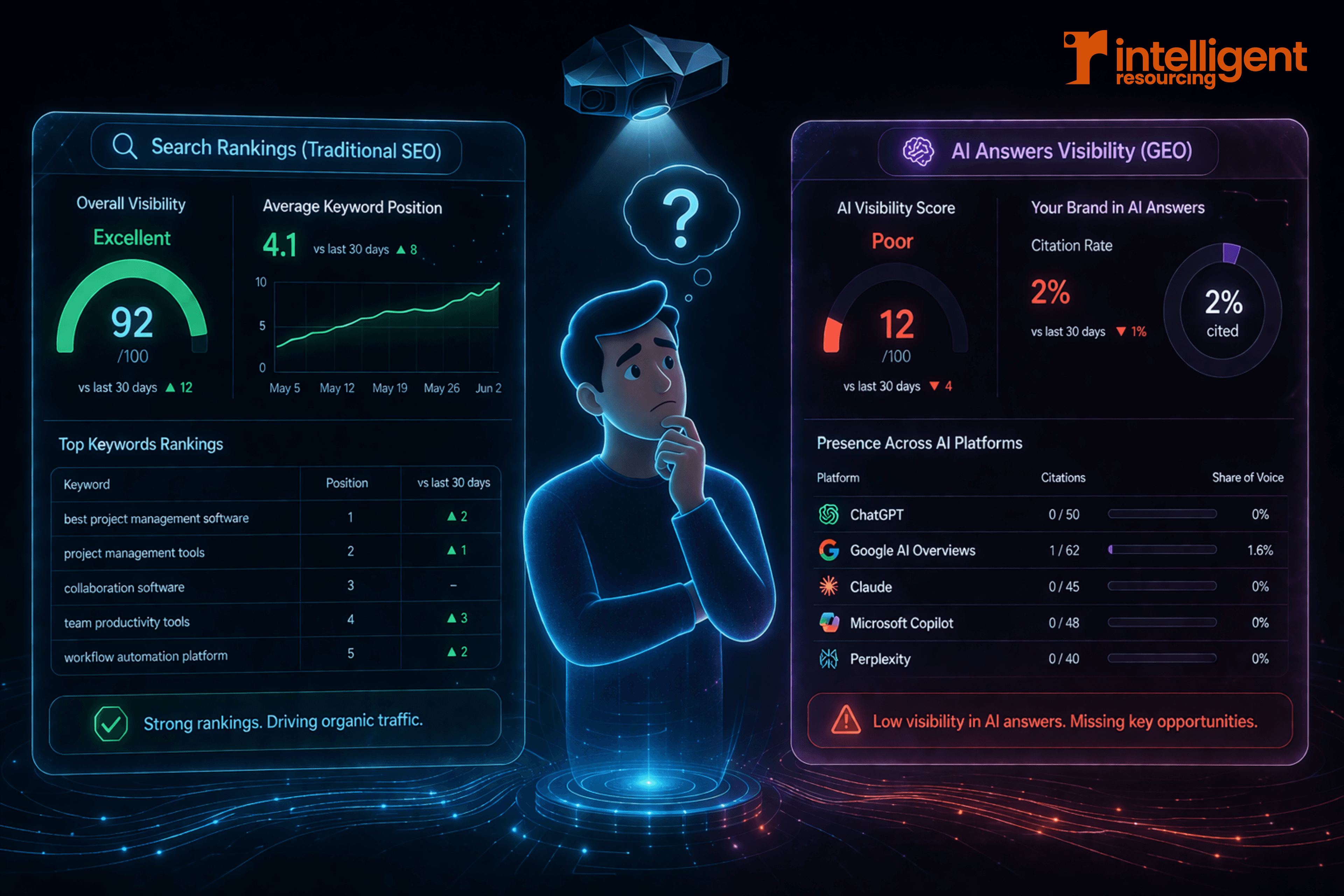

Your rank report looks healthy, but your brand may be invisible in AI answers. Here is how to audit citation rate.

By Ronan Leonard, Founder, Intelligent Resourcing

AI visibility is the measure of how often your brand appears, gets cited and earns traffic across AI-generated answers. Rankings alone do not capture it. A proper audit combines citation rate, Share of Voice, referral traffic, source-page visits and competitive benchmarking across the AI platforms your buyers actually use.

Track citations to see whether AI engines actually use your pages as sources.

Measure Share of Voice to compare your answer-layer presence against the competitors buyers also see.

Group prompts by buyer intent to audit visibility across pricing, comparisons, implementation, proof and vendor-fit questions.

Link visibility to page value so you know whether cited or referred pages support pipeline, not just traffic.

Separate direct signals from proxies so referral traffic, cited pages and answer presence are not treated as the same evidence.

Use the audit to fix gaps by improving weak pages, coverage and source selection in the prompt clusters that matter most.

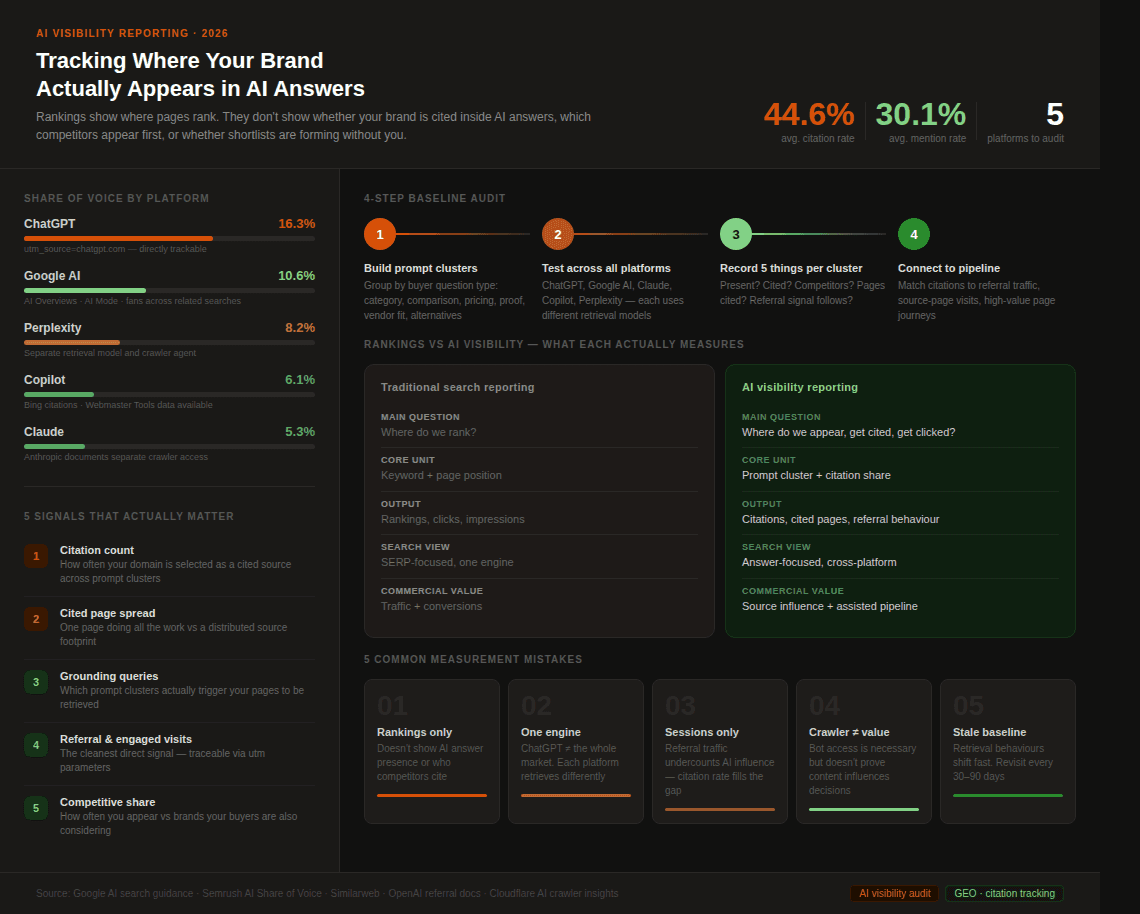

| Criteria | Traditional search reporting | AI visibility reporting |
| --- | --- | --- |
| Main question | Where do we rank? | Where do we appear, get cited, and get clicked? |
| Main unit | Keyword and page position | Prompt cluster, answer presence, citation, competitor share |
| Core output | Rankings, clicks, impressions | Citations, answer placement, cited pages, referral behaviour |
| Search view | SERP-focused | Answer-focused and cross-platform |
| Best benchmark | Position over time | Presence and citation share versus competitors |
| Commercial value | Traffic and conversions | Traffic, source-page influence, assisted pipeline |

If you build an AI visibility audit that tracks citation rate, Share of Voice, referral traffic and source-page visits across platforms, then you have a reliable signal for whether your content is shaping buyer decisions before the click.

If you rely on rank reports alone, then your brand can look healthy in search while remaining completely absent from the answer environments where shortlists are actually being formed.

What “AI visibility” actually means

AI visibility is the measure of how often, how prominently and how usefully your brand appears across AI-generated answers and cited source paths. It is broader than traffic and broader than mentions. A brand can rank on page one and still be absent from the answer layer entirely. That gap is what AI visibility measurement is designed to close.

The reason is simple. AI search experiences can retrieve, combine and cite sources differently from a standard search result. That means answer visibility can diverge from what a marketer sees in a normal rankings report.

That is why AI visibility sits inside generative engine optimisation rather than inside a standard SEO report. The question is not only where your pages rank. It is whether your brand is present where answers are being formed.

Rankings vs AI visibility

Rankings show where a page appears in conventional search. They do not show whether your brand is cited inside an AI answer, whether a competitor is being recommended first, or whether the highest-value buyer questions are being answered without your brand present at all.

Google's AI search guidance explains the gap directly. AI features can fan out across related searches and surface a broader set of supporting links than a normal web search. A page can rank well and still be absent from the answer layer entirely.

Similarweb's AI brand visibility research reinforces the measurement distinction. Tracking AI visibility means understanding how often content is cited, which pages are cited and which queries triggered those citations. That is a fundamentally different measurement problem from rank tracking.

A page can be discoverable but not selected as a source. It can be relevant but not clear enough to reuse in an answer. That is why AI search marketing and AI visibility reporting need to work together rather than being treated as separate conversations.

The five signals that actually matter

A useful AI visibility framework should not rely on one number alone. It should combine five signals.

1) Citation rate

Citation rate measures how often your brand or domain appears as a cited source across a defined set of prompts. It is the most direct answer-layer metric because it tracks whether AI systems are actually selecting your content as supporting evidence.
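As a rough illustration, citation rate can be computed as the share of audited prompts whose answer cited your domain at least once. This is a minimal sketch under stated assumptions: the `PromptResult` record, the sample prompts and the domains `example.com` and `rival.com` are all hypothetical, and the results would come from whatever manual or tool-based audit you run.

```python
from dataclasses import dataclass


@dataclass
class PromptResult:
    """One audited prompt and the domains the AI answer cited (hypothetical record)."""
    prompt: str
    cited_domains: list[str]


def citation_rate(results: list[PromptResult], domain: str) -> float:
    """Share of audited prompts whose answer cited `domain` at least once."""
    if not results:
        return 0.0
    cited = sum(1 for r in results if domain in r.cited_domains)
    return cited / len(results)


# Illustrative data only; example.com and rival.com are placeholders.
results = [
    PromptResult("best outsourcing partner for SaaS", ["example.com", "rival.com"]),
    PromptResult("outsourcing pricing models", ["rival.com"]),
    PromptResult("how to implement offshore support", ["example.com"]),
    PromptResult("outsourcing vendor comparison", []),
]

print(f"{citation_rate(results, 'example.com'):.0%}")  # 2 of 4 prompts -> 50%
```

Keeping the prompt set fixed between audits is what makes the number comparable over time.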

2) Cited page spread

Cited page spread shows whether one page is doing all the work or whether visibility is distributed across a wider source footprint. A broader cited-page base usually indicates stronger topical depth and less dependence on a single asset. This is a strategic inference from current citation research rather than a published platform formula.
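One way to put a number on spread is to count distinct cited pages and check how much of the total the single most-cited page accounts for. The sketch below assumes you have a flat list of cited URLs from your audit; the paths shown are invented, and the two outputs are an illustrative choice rather than a platform-defined metric.

```python
from collections import Counter


def cited_page_spread(cited_urls: list[str]) -> tuple[int, float]:
    """Return (distinct cited pages, share of citations held by the top page)."""
    if not cited_urls:
        return 0, 0.0
    counts = Counter(cited_urls)
    top_count = counts.most_common(1)[0][1]
    return len(counts), top_count / len(cited_urls)


# Hypothetical citations collected across one audit run.
cited_urls = [
    "/pricing", "/pricing", "/pricing",
    "/guides/ai-visibility", "/case-studies/acme",
]
pages, top_share = cited_page_spread(cited_urls)
print(pages, round(top_share, 2))  # 3 distinct pages; top page holds 3 of 5 citations
```

A high top-page share suggests visibility depends on a single asset.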

3) Grounding queries or prompt clusters

Grounding queries show which kinds of phrases and retrieval paths are actually causing your pages to be used. Prompt-cluster visibility is often more useful than isolated keyword visibility because answer engines tend to retrieve around question themes rather than one exact search phrase.

4) Referral and engaged visits

Referrals remain one of the cleanest direct business signals. OpenAI says ChatGPT automatically includes utm_source=chatgpt.com in referral URLs, which makes inbound ChatGPT traffic trackable in analytics platforms. That does not capture all AI influence, but it does capture one important downstream signal.
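If your analytics export includes landing-page URLs, that parameter can be detected with a few lines of standard-library Python. This is a minimal sketch: the `is_chatgpt_referral` helper and the sample URLs are hypothetical, and in practice most analytics platforms expose the same value as a source dimension without any custom code.

```python
from urllib.parse import parse_qs, urlparse


def is_chatgpt_referral(landing_url: str) -> bool:
    """True when the landing URL carries utm_source=chatgpt.com."""
    query = parse_qs(urlparse(landing_url).query)
    return "chatgpt.com" in query.get("utm_source", [])


# Hypothetical landing-page URLs from an analytics export.
landing_urls = [
    "https://example.com/pricing?utm_source=chatgpt.com",
    "https://example.com/blog/post?utm_source=newsletter",
    "https://example.com/services",
]
chatgpt_visits = [u for u in landing_urls if is_chatgpt_referral(u)]
print(len(chatgpt_visits))  # 1
```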

5) Share of Voice

Share of Voice is your competitive share of visibility across a defined set of prompt clusters and AI platforms. It shows not only whether you appear, but how often you appear relative to the brands your buyers are also considering.

That is why Share of Voice belongs alongside citation rate rather than sitting in a separate reporting silo. AI visibility is the wider measurement system. Share of Voice is one of the key metrics inside it.
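Share of Voice has no single agreed formula, and tools define it differently. The sketch below uses one simple formulation as an assumption: each brand's share of all brand appearances across the audited answers, counting a brand once per answer. The brand names and the answer data are placeholders.

```python
from collections import Counter


def share_of_voice(answers: list[list[str]]) -> dict[str, float]:
    """Each brand's share of all brand appearances across the audited answers."""
    # set() counts a brand at most once per answer.
    counts = Counter(brand for brands in answers for brand in set(brands))
    total = sum(counts.values())
    return {brand: n / total for brand, n in counts.items()} if total else {}


# Hypothetical audit: the brands that appeared in each of four answers.
answers = [
    ["YourBrand", "RivalA"],
    ["RivalA"],
    ["RivalA", "RivalB"],
    ["YourBrand"],
]
sov = share_of_voice(answers)
print(round(sov["YourBrand"], 2))  # 2 of 6 appearances
```

Running the same calculation per prompt cluster and per platform shows where the competitive gap is widest.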

How to run a baseline AI visibility audit

A baseline AI visibility audit follows four steps. Start with prompt clusters, test across platforms, record what you find and connect it to traffic behaviour.

Step 1. Build prompt clusters

Group prompts by buyer question type: category understanding, comparison, pricing, implementation, proof, vendor fit and alternatives. Auditing by cluster gives a more accurate picture than testing isolated keywords.

Step 2. Test across the platforms your buyers actually use

For most B2B teams that means ChatGPT, Google's AI search experiences, Claude, Copilot and Perplexity.

Step 3. Record five things for each prompt cluster

For every cluster, note: whether your brand appears, whether it is cited, which competitors appear, which pages are cited and whether any referral or engagement signal follows. That five-point record gives you a repeatable baseline you can benchmark over time.
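The five-point record can live in a spreadsheet, but a small structured format makes it easier to repeat. This is a minimal sketch, not a prescribed schema: the `ClusterObservation` class, its field names and the sample rows are hypothetical, and a `platform` column is added on the assumption that you test each cluster on more than one engine.

```python
import csv
import io
from dataclasses import asdict, dataclass, field, fields


@dataclass
class ClusterObservation:
    """One row of the baseline: a prompt cluster tested on one platform."""
    cluster: str
    platform: str
    brand_appears: bool
    brand_cited: bool
    competitors: list[str] = field(default_factory=list)
    cited_pages: list[str] = field(default_factory=list)
    referral_signal: bool = False


def to_csv(observations: list[ClusterObservation]) -> str:
    """Serialise observations to CSV text, joining list fields with ';'."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(ClusterObservation)])
    writer.writeheader()
    for obs in observations:
        row = asdict(obs)
        row["competitors"] = ";".join(obs.competitors)
        row["cited_pages"] = ";".join(obs.cited_pages)
        writer.writerow(row)
    return buffer.getvalue()


# Hypothetical observations from one audit run.
baseline = [
    ClusterObservation("pricing", "ChatGPT", True, True, ["RivalA"], ["/pricing"]),
    ClusterObservation("comparison", "Perplexity", False, False, ["RivalA", "RivalB"]),
]
print(to_csv(baseline))
```

Saving one file per audit date gives you the history needed to benchmark later runs.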

Step 4. Connect answer-level observations to traffic and page behaviour

Match visible citations to referral traffic, source-page visits and high-value page journeys where possible. The point of the audit is not to prove your brand was mentioned. It is to understand whether visibility is happening on the pages and questions that matter commercially.

What a useful AI visibility dashboard looks like

A good AI visibility dashboard does not try to capture everything in one number. It shows performance from multiple angles so you can see where visibility is strong, where it is weak, and where it is moving.

At minimum, build five views into your dashboard:

Platform view: where visibility is strongest or weakest by engine

Prompt-cluster view: which buyer-question themes are working

Page-level citation view: which URLs are being used as sources

Competitor benchmark view: who appears most often in the same answer environments

Visibility-to-engagement view: whether answer-layer presence leads to useful visits or actions

Separate direct signals from proxy signals. Direct signals include referral traffic from ChatGPT and cited page data where available. Proxy signals include share of voice, platform answer coverage, and traffic changes on high-value pages that rise without a clear attribution path. Both matter. They just should not be treated as the same type of evidence.

Google Search Console is useful as supporting context. The branded queries filter separates branded from non-branded performance, and query groups cluster similar searches into topic-level views. Neither feature measures AI visibility directly, but both help explain branded lift and demand capture around your AI work.

Cloudflare’s AI crawler insights are useful for the access and diagnostic layer. They show which crawlers are active on your site and how your category compares. That is not a citation report, but it is valuable context for access, crawl purpose, and crawler hygiene.

How to connect AI visibility to business value

AI visibility only becomes commercially useful when it connects to pages and behaviour that matter. The starting point is page-level business intent.

Track which cited or referred pages are being surfaced. A visit to a service page, comparison page or pricing page carries more weight than a visit to a generic article.

The next layer is pre-click influence. Buyers can now spend more of the journey inside AI experiences before a visit ever occurs. That means the most valuable signals are often visibility signals such as citations and answer presence, not just clicks. AI visibility is partly a persuasion metric, not only a traffic metric.

The commercial question is not just whether you got a click. It is whether your brand was present when the answer was formed and whether the right pages were involved. That is why ChatGPT search optimisation belongs inside the wider measurement conversation rather than sitting outside it.

Common mistakes teams make when measuring AI visibility

Most teams make the same five mistakes when measuring AI visibility.

1. Relying only on rankings

Rankings show where a page appears in conventional search. They do not show whether your brand is cited inside AI answers or whether a competitor is being recommended first. Answer environments retrieve more broadly and cite more selectively than classic search alone.

2. Treating one engine as the whole market

ChatGPT matters but it is not the whole picture. Anthropic and Perplexity both document separate retrieval models and user agents. Cross-platform auditing is not optional if your buyers use more than one answer tool, and most do.

3. Tracking sessions but not citations

Referral traffic only measures the clicks you receive, not the recommendation layer itself. Citation rate, cited page spread and share of voice give the missing context. Sessions alone will always undercount AI influence.

4. Confusing crawler activity with business value

Crawler data shows which bots are accessing your site. It does not prove your content is being cited or influencing decisions. Access is necessary but it is not sufficient on its own.

5. Never refreshing the baseline

AI interfaces, retrieval behaviours and prompt patterns change quickly. An audit is only useful if it is revisited often enough to show movement across the questions that matter. A 30 to 90 day cadence is a sensible starting point.

What to do after the audit

An audit is only useful if it leads to action. Three steps follow every baseline audit, in this order.

Step 1. Fix access and coverage gaps

If the relevant bots cannot reach the relevant pages, everything else will underperform. Check crawler permissions for each platform you tested. Anthropic and Perplexity both make crawler-level access explicit in their documentation. Cloudflare's AI crawler insights can help you understand which bots are active and where access gaps exist.
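A quick way to sanity-check crawler permissions is to run your robots.txt rules through Python's standard `urllib.robotparser`. The sketch below parses an inline, made-up robots.txt rather than fetching a live one. The user-agent tokens listed are ones the vendors have published, but treat the list as an assumption and confirm current names in each platform's documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; replace with your site's actual file.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

# Assumed crawler tokens; verify against each vendor's current documentation.
AI_USER_AGENTS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot"]

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

page = "https://example.com/pricing"
access = {agent: parser.can_fetch(agent, page) for agent in AI_USER_AGENTS}

for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

This only tests robots.txt rules. Firewall or CDN-level blocking would need to be checked separately.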

Step 2. Strengthen the pages that are weak or absent in your highest-value prompt clusters

The audit tells you where visibility is missing. The next step is to improve those specific pages: clearer answers, better structure, stronger proof and cleaner schema. Earlier articles in this cluster cover that work under ChatGPT search optimisation and AI search marketing. The audit is what tells you where to apply the work first.

Step 3. Monitor on a regular cadence

A 30 to 90 day review rhythm is sensible for most B2B teams. Query clusters evolve, platforms change and source selection shifts over time. The point is not to admire the dashboard. It is to use the data to improve coverage where the business impact is highest.
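At each review, the simplest useful comparison is the change in citation rate per prompt cluster since the last baseline. This is a minimal sketch with invented figures: the cluster names and rates are placeholders, and a cluster missing from either audit is treated as a rate of zero, which is an assumption you may want to handle differently.

```python
def citation_rate_change(
    previous: dict[str, float], current: dict[str, float]
) -> dict[str, float]:
    """Per-cluster change in citation rate between two audits (missing = 0.0)."""
    clusters = sorted(set(previous) | set(current))
    return {c: round(current.get(c, 0.0) - previous.get(c, 0.0), 4) for c in clusters}


# Hypothetical citation rates by prompt cluster from two audit dates.
previous = {"pricing": 0.20, "comparison": 0.35, "implementation": 0.10}
current = {"pricing": 0.30, "comparison": 0.25, "proof": 0.15}

for cluster, delta in citation_rate_change(previous, current).items():
    print(f"{cluster}: {delta:+.2f}")
```

Sorting the output by business value rather than by size of change keeps attention on the clusters that matter commercially.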

FAQs

What is AI visibility?

AI visibility is the measure of how often and how prominently your brand appears in AI-generated answers and their cited source paths. It is broader than rankings because it includes answer inclusion, citation frequency, competitor context, and downstream value.

Is AI visibility the same as AI referral traffic?

No. Referral traffic is one direct signal, but it only captures the visits that reach your site. AI visibility also includes the answers where your brand is present, cited, or absent, even when no click happens. OpenAI’s referral tracking helps with the traffic part, while Microsoft’s AI citation reporting and visibility-share frameworks help with the broader visibility part.

Which platforms should an audit include?

At minimum, include the answer environments your buyers actually use. That usually means some mix of ChatGPT, Google’s AI search experiences, Claude, Copilot, and Perplexity. The official documentation shows these systems use different retrieval and crawler models, so a cross-platform view is safer than relying on one engine alone.

Can AI visibility be measured perfectly?

No. Some of it is directly measurable, such as ChatGPT referral traffic or AI citations in Bing Webmaster Tools. Some of it is proxy-based, such as share of voice, prompt-cluster coverage, or pre-click influence. A good measurement system accepts that mix instead of pretending every AI interaction is fully trackable.

What should a team track first?

Start with citation rate, cited pages, grounding-query clusters, referral traffic, and competitor coverage in the most commercially important prompt clusters. That gives you a practical baseline before you add more detailed reporting layers. This is a synthesis of current platform documentation and visibility-framework guidance.

Conclusions

Tracking rankings still matters but it no longer tells the whole story.

The brands that win in AI search are not only the ones with discoverable pages. They are the ones that appear consistently across the right answer environments, get cited for the right prompts and turn that presence into useful page visits and commercial influence over time.

Do not just measure whether your page ranks. Measure whether your brand is present where AI answers are shaping the decision.